Introduction

Context: Oliver Habryka commissioned me to study and summarize the literature on distributed teams, with the goal of improving altruistic organizations. We wanted this to be as rigorous as possible; unfortunately the rigor ceiling was low, for reasons discussed below. To fill in the gaps, and especially to create a unified model instead of a series of isolated facts, I relied heavily on my own experience on a variety of team types (my favorite of which was an entirely remote company).

This document consists of five parts:

- Summary

- A series of specific questions Oliver asked, with supporting points and citations. My full, disorganized notes will be published as a comment.

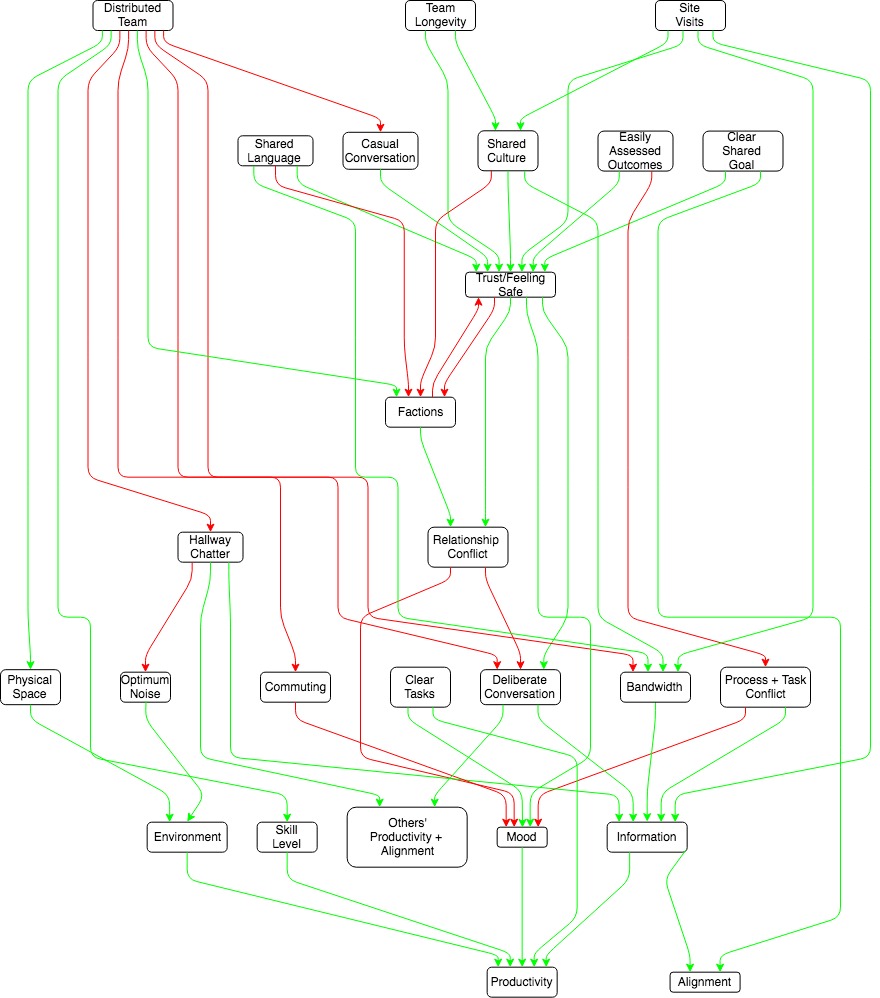

My overall model of worker productivity is as follows:

Highlights and embellishments:

- Distribution decreases bandwidth and trust (although you can make up for a surprising amount of this with well-timed visits).

- Semi-distributed teams are worse than fully remote or fully co-located teams on basically every metric. The politics are worse because geography becomes a fault line for factions, and information is lost because people incorrectly count on proximity to distribute information.

- You can get co-location benefits for about as many people as you can fit in a hallway: after that you’re paying the costs of co-location while benefits decrease.

- No paper even attempted to examine the increase in worker quality/fit you can get from fully remote teams.

Sources of difficulty:

- Business science research is generally crap.

- Much of the research was quite old, and I expect technology to improve results from distribution every year.

- Numerical rigor trades off against nuance. This was especially detrimental when it came to forming a model of how co-location affects politics, where much of what happens is subtle and unseen. The largest studies are generally based on survey data, which can only support crude correlations. The most interesting studies involved researchers reading all of a team’s correspondence over months and conducting in-depth interviews, which can only be done for a handful of teams per paper.

How does distribution affect information flow?

“Co-location” can mean two things: actually working together side by side on the same task, or working in parallel on different tasks near each other. The former has an information bandwidth that technology cannot yet duplicate. The latter can lead to serendipitous information sharing, but also imposes costs in the form of noise pollution and siphoning brain power for social relations.

Distributed teams require information sharing processes to replace the serendipitous information sharing. These processes are less likely to be developed in teams with multiple locations (as opposed to entirely remote teams). Worst of all is being a lone remote worker on a co-located team: you will miss too much information, so the arrangement is feasible only occasionally, despite the fact that measured productivity tends to rise when people work from home.

I think relying on co-location over processes for information sharing is similar to relying on human memory over writing things down: much cheaper until it hits a sharp cliff. Empirically that cliff is about 30 meters, or one hallway. After that, process shines.

List of isolated facts, with attribution:

- “The mutual knowledge problem” (Cramton 2015):

- Assuming knowledge is shared when it is not, including:

- typical minding.

- Not realizing how big a request is, e.g. asking “why don’t you just walk down the hall to check?” without realizing the lab with the data is 3 hours away. The recipient, in turn, does not know that the asker is unaware of this, and so assumes the asker does not value their time.

- Counting on informal information distribution mechanisms that don’t distribute evenly

- Silence can mean many things and is often misinterpreted, e.g. as acquiescence, a deliberate snub, or a message never received.

- Lack of easy common language can be an incredible stressor and hamper information flow (Cramton 2015).

- People commonly cite overhearing hallway conversation as a benefit of co-location. My experience is that Slack is superior for producing this because it can be done asynchronously, but there’s reason to believe I’m an outlier.

- Serendipitous discovery and collaboration fall off by the time you reach 30 meters (chapter 5), or once you’re off the same hallway (chapter 6).

- Being near executives, project decision makers, sources of information (e.g. customers), or simply more of your peers gets you more information (Hinds, Retelny, and Cramton 2015)

How does distribution interact with conflict?

Distribution increases conflict and reduces trust in a variety of ways.

- Distribution doesn’t lead to factions in and of itself, but can in the presence of other factors correlated with location

- e.g. if the engineering team is in SF and the finance team in NY, that’s two correlated traits for fault lines to form around. Conversely, having common traits across locations (e.g. work role, being parents of young children) fights factionalization (Cramton and Hinds 2005).

- Language is an especially likely fault line.

- Levels of trust and positive affect are generally lower among distributed teams (Mortensen and Neeley 2012), and even among co-located people who work from home frequently enough (Gajendran and Harrison 2007).

- Conflict is generally higher in distributed teams (O’Leary and Mortensen 2009; Martins, Gilson, and Maynard 2004).

- It’s easier for conflict to result in withdrawal among workers who aren’t co-located, amplifying the costs and making problem solving harder.

- People are more likely to commit the fundamental attribution error against remote teammates (Wilson et al 2008).

- Different social norms or lack of information about colleagues lead to misinterpretation of behavior (Cramton 2016) e.g.,

- you don’t realize your remote co-worker never smiles at anyone and so assume he hates you personally.

- different ideas of the meaning of words like “yes” or “deadline”.

- By analogy to biology, I predict conflict is most likely to arise when two teams are relatively evenly matched in terms of power/resources and when spoils are winner-take-all.

- Most site:site conflict is ultimately driven by desire for access to growth opportunities (Hinds, Retelny, and Cramton 2015). It’s not clear to me this would go away if everyone were co-located: it’s easier to view a distant colleague as a threat than a close one, but if the number of opportunities is the same, moving people closer doesn’t make them not threats.

- Note that conflict is not always bad; it can mean people are honing their ideas against others’. However, the literature on virtual teams is implicitly talking about relationship conflict, which tends to be a pure negative.

When are remote teams preferable?

- You need more people than can fit in a 30m radius circle (chapter 5), or a single hallway (chapter 6).

- Multiple critical people can’t be co-located, e.g.,

- Wave’s compliance officer wouldn’t leave semi-rural Pennsylvania, and there was no way to get a good team assembled there.

- Lobbying must be based in Washington, manufacturing must be based somewhere cheaper.

- Customers are located in multiple locations, such that you can co-locate with your team members or customers, but not both.

- If you must have some team members not co-located, better to be entirely remote than leave them isolated. If most of the team is co-located, they will not do the things necessary to keep remote individuals in the loop.

- There is a clear shared goal

- The team will be working together for a long time and knows it (Alge, Wiethoff, and Klein 2003).

- Tasks are separable and independent.

- You can filter for people who are good at remote work (independent, good at learning from written work).

- The work is easy to evaluate based on outcome or produces highly visible artifacts.

- The work or worker benefits from being done intermittently, or doesn’t lend itself to 8-hours-and-done, e.g.,

- Wave’s anti-fraud officer worked when the suspected fraud was happening.

- Engineer on-call shifts.

- You need to be process- or documentation-heavy for other reasons, e.g. legal, or find it relatively cheap to be so (chapter 2).

- You want to reduce variation in how much people contribute (i.e., get shy people to talk more) (Martins, Gilson, and Maynard 2004).

- Your work benefits from long OODA loops.

- You anticipate low turnover (chapter 2).

How to mitigate the costs of distribution

- Site visits and retreats, especially early in the process and at critical decision points. I don’t trust the papers quantitatively, but some report site visits doing as good a job at trust- and rapport-building as co-location, so it’s probably at least that order of magnitude (see Hinds and Cramton 2014 for a long list of studies showing good results from site visits).

- Site visits should include social activities and meals, not just work. Having someone visit and not integrating them socially is worse than no visit at all.

- Site visits are more helpful than retreats because they give the visitor more context about their coworkers (chapter 2). This probably applies more strongly in industrial settings.

- Use voice or video when need for bandwidth is higher (chapter 2).

- Although high-bandwidth virtual communication may make it easier to lie or mislead than either in person or low-bandwidth virtual communication (Håkonsson et al 2016).

- Make people very accessible, e.g.,

- Wave asked that all employees leave Skype on auto-answer while working, to recreate walking to someone’s desk and tapping them on the shoulder.

- Put contact information in an accessible wiki or on Slack, instead of making people ask for it.

- Lightweight channels for building rapport, e.g., CEA’s compliments Slack channel, Wave’s kudos section in weekly meeting minutes (personal observation).

- Build over-communication into the process.

- In particular, don’t let silence carry information. Silence can be interpreted a million different ways (Cramton 2001).

- Things that are good all the time but become more critical on remote teams

- Clear goals/objectives

- Clear metrics for your goals/objectives

- Clear roles (Zaccaro, Ardison, and Orvis 2004)

- Regular 1:1s

- Clear communication around current status

- Long time horizons (chapter 10).

- Shared identity (Hinds and Mortensen 2005) with identifiers (chapter 10), e.g. t-shirts with logos.

- Have a common chat tool (e.g., Slack or Discord) and give workers access to as many channels as you can, to recreate hallway serendipity (personal observation).

- Hire people like me

- Long OODA loop

- Good at learning from written information

- Good at working asynchronously

- Don’t require social stimulation from work

- Be fully remote, as opposed to just a few people working remotely or multiple co-location sites.

- If you have multiple sites, lumping together similar people or functions will lead to more factions (Cramton and Hinds 2005). But co-locating people who need to work together takes advantage of the higher bandwidth co-location provides.

- Train workers in active listening (chapter 4) and conflict resolution. Microsoft uses the Crucial Conversations class, and I found the book of the same name incredibly helpful.

Cramton 2016 was an excellent summary paper I refer to a lot in this write-up. It’s not easily available online, but the author was kind enough to share a PDF with me that I can pass on.

My full notes will be published as a comment on this post.