John: http://gap.hks.harvard.edu/women%E2%80%99s-empowerment-action-evidence-randomized-control-trial-africa

[Women’s Empowerment in Action: Evidence from a Randomized Control Trial in Africa]

Me: That’s awesome. Wait, why are they jumping between percentage-point changes and percent changes? And they don’t give the absolute numbers at all.*

John: http://www.ucl.ac.uk/~uctpimr/research/ELA.pdf

Me: Sweet. Wait, so they plopped some afterschool clubs down and then measured outcomes for girls that attended them? That’s a hell of a confound.**

Paper: Nope, this is an RCT, and we compared both attendees with non-attendees (which will overestimate the impact because of confounds and miss any spillover effects on non-attendees) and treatment communities with control communities (which will underestimate the impact because only 20% of girls attended the club, but will catch spillover effects).
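The trade-off the paper describes can be seen in a toy simulation. This is a minimal sketch under made-up assumptions, not the paper’s model or data: each girl has a hypothetical “motivation” score that raises her outcome on its own, communities are assigned to treatment by coin flip, and in treatment communities only the most motivated 20% attend. Every parameter (`true_effect`, `motivation_effect`, `spillover`, and so on) is invented for illustration.

```python
import random
from statistics import mean

def simulate(n_communities=200, girls_per_community=100, attend_rate=0.2,
             true_effect=1.0, motivation_effect=2.0, spillover=0.0, seed=0):
    """Toy model; all parameters are hypothetical, not taken from the paper.

    Each girl has a motivation score in [0, 1) that raises her outcome
    whether or not a club exists. In treatment communities only the most
    motivated `attend_rate` share of girls attend the club.
    """
    rng = random.Random(seed)
    treat_all, control_all = [], []    # every girl, grouped by community assignment
    attendees, non_attendees = [], []  # girls inside treatment communities only
    for _ in range(n_communities):
        treated = rng.random() < 0.5  # coin-flip randomization per community
        for _ in range(girls_per_community):
            m = rng.random()
            outcome = motivation_effect * m + rng.gauss(0, 1)
            if treated:
                attends = m > 1 - attend_rate  # self-selection on motivation
                if attends:
                    outcome += true_effect
                    attendees.append(outcome)
                else:
                    outcome += spillover  # effect on girls who never attend
                    non_attendees.append(outcome)
                treat_all.append(outcome)
            else:
                control_all.append(outcome)
    naive = mean(attendees) - mean(non_attendees)  # confounded by motivation
    itt = mean(treat_all) - mean(control_all)      # randomized comparison
    return naive, itt

naive, itt = simulate()
print(f"attendees vs non-attendees: {naive:.2f}")
print(f"treatment vs control communities: {itt:.2f}")
```

With these invented numbers the true per-attendee effect is 1.0, but the attendee comparison lands near 2.0 (motivation inflates it) while the community comparison lands near 0.2 (diluted by the 80% who never attended). Setting `spillover` above zero shrinks the attendee gap and raises the community gap, which is the “misses spillovers / catches spillovers” point in the dialogue.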

Me: But mobility is high, what if girls leave the area?

Paper: We track them. Plus, attendees, members of treatment communities, and members of control communities had similar attrition rates.

Me: I’m still distraught you’re only giving rates of change, not absolute numbers.

Paper: Jesus Christ, not everyone loves numbers as much as you. The numbers are in the appendix.

Me: This looks like you made it worse.

Paper: Maybe it would help if you read the part that explains how to read the numbers.

Me: Your sexual health knowledge test includes questions like “A woman cannot catch HIV while on her period. T/F”. That’s the opposite of true.

Paper: You see why we’re concerned.

Me: HA! You said you calculated based on living in a treatment area, not participation, but Table 2 is contingent on participation.

Paper: Table 2 describes duration and intensity of club attendance.

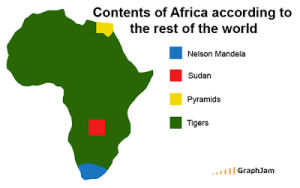

Me: Fine. Your study was perfect and its results are amazing. But you said Africa, the study takes place entirely in Uganda, and treating Africa as a uniform mass is racist. Why don’t you just talk about your tiger prevention efficacy?

The paper graciously conceded my last point, but it knew my heart wasn’t in it. There is no end to the number of follow-up studies one can suggest, but this is as good as a single study can be, and I accepted its conclusions. Founding afterschool clubs for girls in Uganda, with a mix of social activities plus vocational and health education, has pretty amazing results. $17.90 US (I know the exact number because the paper specifies it, which I love) spent on a girl translates to an additional $1.70 in monthly spending, almost a 50% increase. (They tracked spending rather than earnings because self-employment earnings tend to be feast or famine. Employment also went up significantly.) The clubs also led to a decrease in rape and childbearing. At $1.70 a month, the $17.90 is recovered in about 10.5 months, so the program pays for itself in less than a year, with some additional benefits on the side. And to the researchers’ credit, the abstract trumpeted the less impressive community-wide numbers, when they could just as easily have used the confounded but shiny attendee numbers.
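The “pays for itself” claim is simple division. Here is a minimal sketch of that arithmetic; it follows this post’s framing of extra spending as the return on the program, and it assumes (my simplification, not the paper’s) that the gain starts right away, stays constant, and isn’t discounted. The function name `payback_months` is just illustrative.

```python
COST_PER_GIRL = 17.90          # USD, the figure quoted from the paper above
EXTRA_MONTHLY_SPENDING = 1.70  # USD per month, also quoted above

def payback_months(cost, monthly_gain):
    """Months until the cumulative gain equals a one-off cost.

    Simplifying assumptions: the gain starts immediately, stays constant,
    and is not discounted.
    """
    return cost / monthly_gain

months = payback_months(COST_PER_GIRL, EXTRA_MONTHLY_SPENDING)
print(f"{months:.1f} months")  # 10.5 months
```

Discounting future spending, or a gain that fades over time, would push the payback point later, so treat the 10.5 months as a best case.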

I mention this for two reasons. One: someone found a way to improve the bodily autonomy and earnings of African young women, basically for free. That’s neat. Two: I read this paper the morning after spending hours on a HAES (Health at Every Size) post (which you may or may not ever read because WordPress ate it, thank you very much. WordPress ate this one halfway through too, so what you’re reading is a CliffsNotes version of my original Socratic dialogue). The HAES post was enormously frustrating: of the two claims I investigated, I found one (that cyclic dieting, rather than current weight, increases blood pressure) to be pretty misrepresentative of the data, and the other (that high blood pressure hurts thin people more than fat people) pretty well supported…for a medical claim. By which I mean the evidence came from either retrospective studies (too many confounds to contemplate) or rats specifically bred to have the physical fitness of an aging Tony Soprano. That genuinely counts as good evidence in medical research, and that fact is really frightening given how much is riding on getting the correct answer.

So when I read this paper and saw that the study was well designed, that the authors explained their modeling in a way an educated non-expert can understand, and that they refuted every one of my criticisms, I felt a kind of relief. I’m not quite ready to say “trust the experts”, but at least I didn’t spend two hours tracking down reasons not to trust them.

*If something goes from 10% to 20%, that’s an increase of 100% but only 10 percentage points. Switching between the two and failing to give the absolute percentages is a common trick for making data look more impressive than it is.
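To make the footnote concrete, here is a small sketch that computes both numbers for the same change. The function names are my own, purely illustrative. The second pair shows why the baseline matters: the same 10-point jump is a 100% increase from 10% but only a 20% increase from 50%.

```python
def percentage_point_change(before, after):
    """Difference between two rates that are already expressed in percent."""
    return after - before

def relative_change_percent(before, after):
    """Change expressed as a percentage of the starting rate."""
    return (after - before) * 100 / before

# The footnote's example: 10% -> 20%
print(percentage_point_change(10, 20))  # 10
print(relative_change_percent(10, 20))  # 100.0

# The same 10-point jump from a higher baseline looks far less dramatic
print(percentage_point_change(50, 60))  # 10
print(relative_change_percent(50, 60))  # 20.0
```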

**Confounding variable, i.e. something that varies between your control and treatment group that is not the thing you are studying, and affects outcomes. The most popular confounding variable is time, e.g.

But here I’m worried about motivation: girls who show up to a club to learn entrepreneurial and life skills are probably more likely to start businesses and delay marriage than those that don’t attend,

2 thoughts on “Poverty, Medicine, and Research”
